
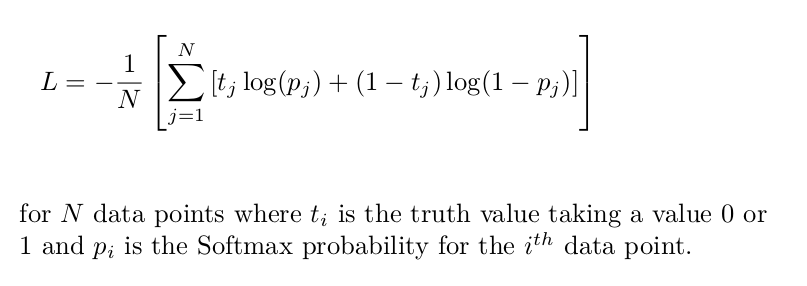

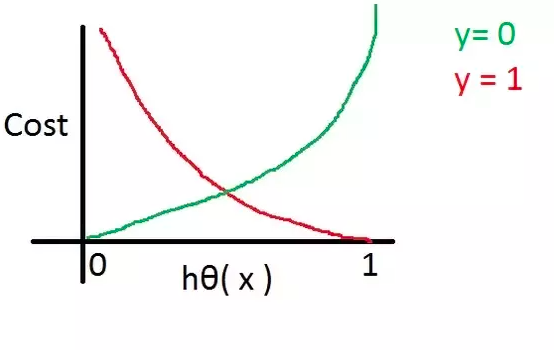

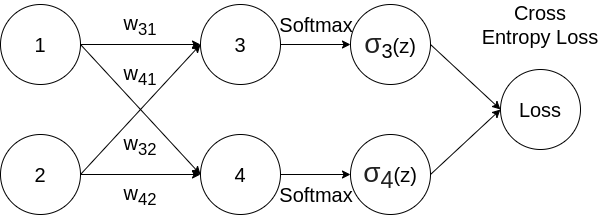

Among the loss functions, `binary_cross_entropy_with_logits` measures binary cross entropy between target and input logits, while its counterpart measures binary cross entropy between the target and input probabilities. Another criterion computes the cross entropy loss between input and target, and there is also the Connectionist Temporal Classification loss.

Among the element-wise operations, one thresholds each element of the input Tensor. Others apply the rectified linear unit function, the HardTanh function, the hardswish function, and the function $\text{ReLU6}(x) = \min(\max(0, x), 6)$.

The pooling operations all act on an input signal composed of several input planes. Average pooling comes in 1D, 2D, and 3D forms: the 2D operation works in $kH \times kW$ regions by step size $sH \times sW$, and the 3D operation works in $kT \times kH \times kW$ regions by step size $sT \times sH \times sW$. Max pooling is likewise available in 1D, 2D, and 3D; power-average pooling in 1D and 2D; adaptive max pooling and adaptive average pooling in 1D, 2D, and 3D; and fractional max pooling in 2D and 3D.

Cross entropy is a good loss function for classification problems because minimizing it reduces the distance between two probability distributions: the one the model predicts and the one given by the labels. For multi-class classification tasks, cross entropy loss is a strong candidate and perhaps the most popular one. See the screenshot below for the cross entropy loss function.
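To make the multi-class case concrete, here is a minimal NumPy sketch of cross entropy computed from raw scores (logits). The helper name `softmax_cross_entropy` and the sample numbers are illustrative assumptions, not part of any library.

```python
import numpy as np

def softmax_cross_entropy(logits, targets):
    """Mean cross entropy between rows of raw scores and integer class labels.

    Hypothetical helper for illustration only.
    """
    logits = np.asarray(logits, dtype=float)
    targets = np.asarray(targets)
    # Subtract the row-wise maximum so the exponentials cannot overflow.
    shifted = logits - logits.max(axis=1, keepdims=True)
    # Log-softmax: log of each class probability.
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Pick the log-probability assigned to the correct class of each example.
    picked = log_probs[np.arange(len(targets)), targets]
    return -picked.mean()

# Made-up scores for two examples and three classes.
logits = np.array([[2.0, 0.5, -1.0],
                   [0.1, 0.2, 3.0]])
good_loss = softmax_cross_entropy(logits, [0, 2])  # labels match the largest scores
bad_loss = softmax_cross_entropy(logits, [2, 0])   # labels match the smallest scores
print(good_loss, bad_loss)
```

The loss is small when the largest score sits on the labelled class and grows when it does not. With identical scores for all three classes it equals `log(3)`, the loss of a uniform guess.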

Cross-entropy loss, or log loss, measures the performance of a classification model whose output is a probability value between 0 and 1.
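As a rough illustration of how log loss scores a probability output, the sketch below evaluates it for a single example whose true label is 1. The function name `log_loss` and the clipping constant `eps` are assumptions made for this example.

```python
import numpy as np

def log_loss(y_true, p_pred, eps=1e-12):
    """Per-example binary log loss (hypothetical helper for illustration)."""
    y = np.asarray(y_true, dtype=float)
    # Clip probabilities away from 0 and 1 so the logarithm stays finite.
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1.0 - eps)
    return -(y * np.log(p) + (1.0 - y) * np.log(1.0 - p))

# The true label is 1 in all three cases; only the predicted probability changes.
confident_right = log_loss(1, 0.95)
unsure = log_loss(1, 0.5)
confident_wrong = log_loss(1, 0.05)
print(confident_right, unsure, confident_wrong)
```

The three values increase in that order: a confident correct prediction costs little, a coin-flip prediction costs `log(2)`, and a confident wrong prediction costs the most.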

For binary classification, the typical loss function is binary cross entropy, also called logarithmic loss, log loss, or logistic loss. It returns high values for bad predictions and low values for good predictions. The entropy of a random variable X is the level of uncertainty inherent in the variable's possible outcomes.
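The idea of entropy as uncertainty can be sketched numerically. The example below assumes a discrete random variable given as a list of outcome probabilities and measures entropy in bits; the function name `entropy` is chosen for illustration.

```python
import numpy as np

def entropy(probs):
    """Shannon entropy in bits of a discrete distribution (illustrative helper)."""
    p = np.asarray(probs, dtype=float)
    # Outcomes with zero probability contribute nothing (0 * log 0 is taken as 0).
    nonzero = p[p > 0]
    return -np.sum(nonzero * np.log2(nonzero))

fair_coin = entropy([0.5, 0.5])    # most uncertain two-outcome variable: 1 bit
biased_coin = entropy([0.9, 0.1])  # more predictable, so lower entropy
certain = entropy([1.0, 0.0])      # the outcome is known in advance: 0 bits
print(fair_coin, biased_coin, certain)
```

The more predictable the outcome, the lower the entropy: the fair coin carries one full bit of uncertainty, the biased coin roughly 0.47 bits, and the certain outcome none.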

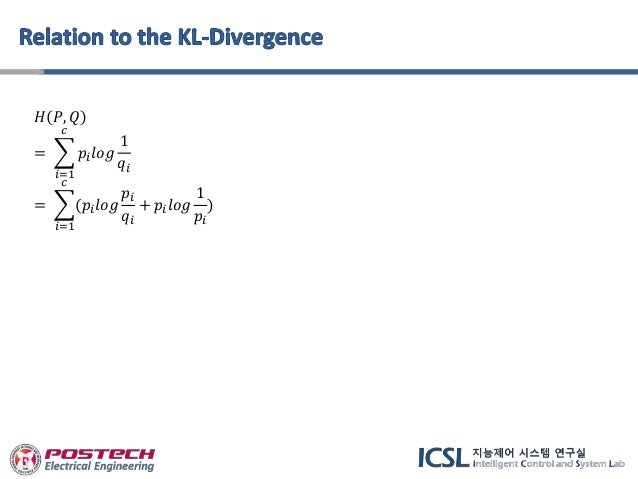

Cross entropy is the sum of the entropy and the KL divergence: $H(p, q) = H(p) + D_{KL}(p \,\|\, q)$. Cross entropy loss can be defined as $CE(A, B) = -\sum_{x} p(x) \log q(x)$, where $p$ is the distribution of the training labels and $q$ is the predicted distribution. When the predicted class and the training class have the same probability distribution, the KL divergence is zero and the cross entropy reduces to the entropy of the label distribution; for one-hot labels that entropy is zero, so the cross entropy is zero too. For binary labels in NumPy, the per-example log-likelihood is `loss = np.multiply(np.log(predY), Y) + np.multiply((1 - Y), np.log(1 - predY))` and the cross entropy cost is `cost = -np.sum(loss) / m`, where `m` is the number of examples in the batch and `predY` is the predicted probability of `Y`.
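The relationship between cross entropy, entropy, and KL divergence, and the batch cost written with `Y`, `predY`, and `m`, can be checked with a short NumPy sketch. The distributions and labels below are made-up values, and the helper functions are illustrative rather than taken from any library.

```python
import numpy as np

def entropy(p):
    """H(p) in nats; assumes every probability is strictly positive."""
    return -np.sum(p * np.log(p))

def kl_divergence(p, q):
    """D_KL(p || q) in nats; assumes strictly positive probabilities."""
    return np.sum(p * np.log(p / q))

def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) log q(x)."""
    return -np.sum(p * np.log(q))

# Made-up true distribution p and predicted distribution q.
p = np.array([0.7, 0.2, 0.1])
q = np.array([0.5, 0.3, 0.2])
decomposed = entropy(p) + kl_divergence(p, q)
print(cross_entropy(p, q), decomposed)  # the two numbers agree

# Batch binary cross entropy cost using the names from the text.
Y = np.array([1.0, 0.0, 1.0, 0.0])      # made-up labels
predY = np.array([0.9, 0.2, 0.6, 0.4])  # made-up predicted probabilities of Y = 1
m = Y.shape[0]                          # number of examples in the batch
loss = np.multiply(np.log(predY), Y) + np.multiply(1 - Y, np.log(1 - predY))
cost = -np.sum(loss) / m
print(cost)
```

When `q` equals `p` the KL term vanishes, so the cross entropy falls to the entropy of `p`, which is its minimum for a fixed `p`. In practice the predicted probabilities are usually clipped away from 0 and 1 before taking logarithms; that step is omitted here to keep the sketch close to the formula.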
